It can be regarded as a compressed format of the input. The encoder processes the input and produces one compact representation, called z, from all the input timesteps. The encoder and decoder are nothing more than stacked RNN layers, such as LSTM’s. Sounds obvious and optimal? Transformers proved us it’s not! A high-level view of encoder and decoder The reason is simple: we liked to treat sequences sequentially. In case you are wondering, recurrent neural networks ( RNNs) dominated this category of tasks. The two sequences can be of the same or arbitrary length. The goal is to transform an input sequence (source) to a new one (target). For instance, text representations, pixels, or even images in the case of videos. The elements of the sequence x 1, x 2 x_1, x_2 x 1 , x 2 , etc. Sequence to sequence learningīefore attention and transformers, Sequence to Sequence ( Seq2Seq) worked pretty much like this: Attention became popular in the general task of dealing with sequences. So, since we are dealing with “sequences”, let’s formulate the problem in terms of machine learning first. The attention mechanism emerged naturally from problems that deal with time-varying data (sequences). ~ Alex Graves 2020 Īlways keep this in the back of your mind. Let’s start from the beginning: What is attention? Glad you asked! After this article, we will inspect the transformer model like a boss. In NLP, transformers and attention have been utilized successfully in a plethora of tasks including reading comprehension, abstractive summarization, word completion, and others.Īfter a lot of reading and searching, I realized that it is crucial to understand how attention emerged from NLP and machine translation. Now they managed to reach state-of-the-art performance in ImageNet. Honestly, transformers and attention-based methods were always the fancy things that I never spent the time to study. 
Also encourage your child to ask you questions if they do not understand, for example ‘I don’t know that word, what does X mean?’ or ‘do you mean…?’ This will highlight that an important and helpful part of listening is also asking questions.I have always worked on computer vision applications. Encourage your child to listen carefully so that the pictures are identical. Give your child instructions so that he/she draws the picture the same as yours. Draw a simple picture without letting your child see it. Barrier Games – Take a piece of paper each.Your child needs to check that what they are listening to makes sense. Make the game harder by giving longer instructions or impossible instructions (e.g. Your child has to listen carefully, if you do not say ‘Simon says’ then they do not follow the instruction.

Simon Says – Give instructions such as ‘Simon says…put your hands on your head then touch the floor’.Praise your child for good listening – be specific about what they have done well.Check your child has listened to instructions or information by asking them to explain in their own words what you have said.Give time frames for how long your child has to attend to a task, for example, when doing homework set an egg timer for a realistic amount of time and after this they can have a break.

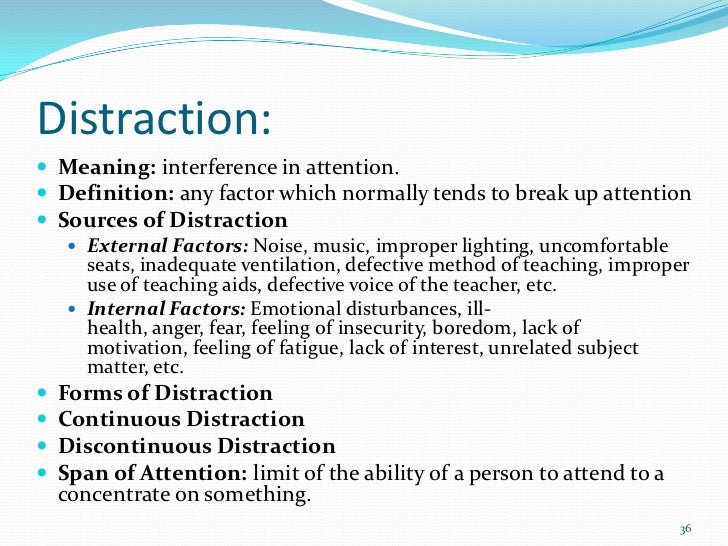

This can help focus their attention and listening. Use visuals such as gestures, photos and objects alongside what you say.Keep your language short and simple so your child does not need to listen for too long.This lets them know that they need to listen. Say your child’s name before telling them something.They may try to begin an activity before hearing the full instruction.They may need instructions regularly repeated.They may interrupt other people talking and find it difficult to wait their turn.They may find it difficult to do an activity and listen to spoken information at the same time.
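To make the encoder idea from the Seq2Seq description concrete, here is a minimal sketch of a recurrent encoder that reads a sequence one element at a time and returns a single fixed-size vector playing the role of z. It is an illustration under loud assumptions, not the setup the article describes: it swaps the stacked LSTM layers for a single vanilla tanh RNN layer, the weights are random and untrained, and the names `make_encoder`, `encode`, `w_xh` and `w_hh` are hypothetical.

```python
import math
import random


def make_encoder(input_size, hidden_size, seed=0):
    """Build a toy single-layer tanh RNN encoder (assumption: random, untrained weights)."""
    rng = random.Random(seed)

    def rand_matrix(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

    w_xh = rand_matrix(hidden_size, input_size)   # input -> hidden weights
    w_hh = rand_matrix(hidden_size, hidden_size)  # hidden -> hidden weights

    def encode(sequence):
        h = [0.0] * hidden_size  # initial hidden state
        for x in sequence:  # tokens are processed strictly one after another
            h = [
                math.tanh(
                    sum(w_xh[i][j] * x[j] for j in range(input_size))
                    + sum(w_hh[i][k] * h[k] for k in range(hidden_size))
                )
                for i in range(hidden_size)
            ]
        return h  # the last hidden state stands in for z

    return encode


encode = make_encoder(input_size=3, hidden_size=4)
short_seq = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
long_seq = short_seq + [[0.0, 0.0, 1.0]] * 5

z_short = encode(short_seq)
z_long = encode(long_seq)
print(len(z_short), len(z_long))  # prints "4 4"
```

Whatever the input length, the result has `hidden_size` entries, which is the sense in which z can be regarded as a compressed format of the input. The loop also shows why this family of models treats sequences sequentially: each step needs the hidden state from the previous one.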
